This post covers the top AI data pipeline platforms for modern enterprises. With data volumes growing rapidly, businesses need dependable tools to automate, monitor, and optimize their data workflows.


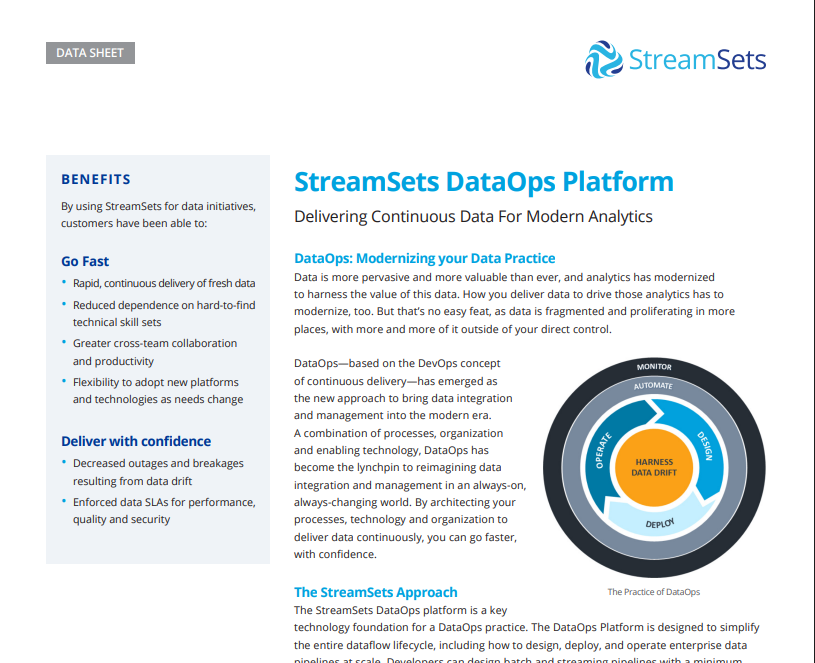


These platforms help keep data clean, organized, and usable, from real-time streaming to scalable AI integration, so businesses can make better-informed decisions and innovate more effectively.

Key Points: Best AI Data Pipeline Platforms for Enterprises

| Platform | Key Point |
|---|---|
| Prefect Orion | Modern workflow orchestration with real-time monitoring and low-code automation for complex pipelines. |
| Dagster | Data orchestrator that enables testable, versioned, and maintainable pipelines with strong developer tooling. |
| Google Cloud Dataflow | Fully managed stream and batch processing for scalable, serverless data pipelines on Google Cloud. |
| Azure Data Factory + Synapse | Integrated ETL and analytics platform for building end-to-end data pipelines with cloud scalability. |
| Databricks Lakehouse Platform | Combines data engineering, analytics, and AI workflows in a unified, scalable lakehouse architecture. |
| Snowflake Snowpark + Streamlit | Enables building and deploying pipelines and apps directly inside Snowflake for real-time analytics. |
| MuleSoft Anypoint AI Pipelines | Enterprise-grade integration platform to connect apps, data, and AI services for automated pipelines. |
| Confluent Kafka Platform | Real-time event streaming platform for building high-throughput, low-latency data pipelines. |
| Fivetran + dbt | Automated ETL/ELT pipelines with transformation support, making analytics-ready data delivery simple. |
| StreamSets DataOps Platform | Continuous data integration and pipeline monitoring platform for reliable, real-time data flow. |

1. Prefect Orion

Prefect Orion is a modern workflow orchestration platform that simplifies automating and monitoring complex data pipelines. Its user-friendly interface and low-code approach let businesses build, schedule, and manage workflows efficiently. Strong error handling, retries, and real-time observability help keep pipelines scalable and dependable.

Because of its flexibility and cloud-native capabilities, Prefect Orion frequently ranks among the best AI data pipeline platforms for enterprises. It suits teams that need a combination of simplicity, dependability, and full visibility into their data operations.
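To make the retry behavior mentioned above concrete, here is a minimal plain-Python sketch of what an orchestrator automates when a step fails. It is not Prefect's API: `with_retries` and `flaky_extract` are hypothetical names invented for this illustration, and a real orchestrator would also record state, schedule the run, and send alerts.

```python
import time
from functools import wraps


def with_retries(max_retries=3, delay_seconds=0.0):
    """Re-run a failing step up to max_retries extra times before giving up."""
    def decorator(func):
        @wraps(func)
        def wrapper(*args, **kwargs):
            attempt = 0
            while True:
                try:
                    return func(*args, **kwargs)
                except Exception as exc:
                    attempt += 1
                    if attempt > max_retries:
                        raise  # out of retries: surface the error to the caller
                    print(f"{func.__name__} failed ({exc}); retry {attempt}/{max_retries}")
                    time.sleep(delay_seconds)
        return wrapper
    return decorator


calls = {"count": 0}


@with_retries(max_retries=3)
def flaky_extract():
    """Hypothetical extract step that fails twice, then succeeds."""
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("source temporarily unavailable")
    return [1, 2, 3]


rows = flaky_extract()
```

The step succeeds on its third attempt without the caller writing any retry logic, which is the convenience orchestration platforms aim to provide.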

Prefect Orion Features

- Low-code interface for modern workflow orchestration.

- Real-time pipeline monitoring and notifications.

- Automated retries and strong error handling.

- Cloud-native, serverless deployment support.

- Modular, reusable pipeline components.

Prefect Orion

| Pros | Cons |
|---|---|
| Low-code workflow orchestration makes pipeline creation simple. | Can require technical knowledge for highly customized workflows. |
| Real-time monitoring and alerts improve reliability. | Limited prebuilt connectors compared to some enterprise platforms. |
| Robust error handling and automatic retries. | Smaller community than older orchestration tools. |
| Cloud-native and serverless deployment ready. | On-premises deployment requires extra setup. |
| Modular and reusable pipeline components enhance flexibility. | Advanced features may require paid enterprise plan. |

2. Dagster

Dagster is a data orchestrator designed for pipelines that are version-controlled, testable, and maintainable. It emphasizes software engineering best practices for data processing, which lets developers build modular, reusable components.

Developer tools such as its type system and observability dashboards help catch pipeline problems before they reach production. Dagster integrates smoothly with modern data ecosystems and streamlines deployment for both batch and streaming data, earning it a place among the best AI data pipeline platforms for enterprises.

Businesses benefit from Dagster’s structured, collaborative, and dependable approach to pipeline design, which helps protect data integrity throughout.
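The idea of modular, testable pipeline components can be sketched in plain Python. The example below is not Dagster's API: the step functions, the `STEPS` registry, and `run_pipeline` are hypothetical names chosen for illustration. It uses the standard-library `graphlib` module (Python 3.9+) to run steps in dependency order, which is roughly the job an orchestrator takes over.

```python
from graphlib import TopologicalSorter


def raw_orders():
    """Hypothetical source step returning sample rows."""
    return [{"id": 1, "amount": 20.0}, {"id": 2, "amount": -5.0}]


def clean_orders(raw_orders):
    """Drop rows with non-positive amounts."""
    return [o for o in raw_orders if o["amount"] > 0]


def revenue(clean_orders):
    """Sum the amounts of the cleaned rows."""
    return sum(o["amount"] for o in clean_orders)


# Step name -> (function, names of upstream steps it depends on).
STEPS = {
    "raw_orders": (raw_orders, []),
    "clean_orders": (clean_orders, ["raw_orders"]),
    "revenue": (revenue, ["clean_orders"]),
}


def run_pipeline(steps):
    """Run every step after its upstream steps and collect the results."""
    graph = {name: set(deps) for name, (_, deps) in steps.items()}
    results = {}
    for name in TopologicalSorter(graph).static_order():
        func, deps = steps[name]
        results[name] = func(*(results[d] for d in deps))
    return results


results = run_pipeline(STEPS)
```

Because each step is an ordinary function, it can be unit-tested in isolation (for example, calling `clean_orders` with a handcrafted list) before the whole pipeline ever runs.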

Dagster Features

- Version-controlled, testable, type-safe pipelines.

- Developer-friendly tools and IDE support.

- Observability dashboards for pipelines.

- Support for both batch and streaming data systems.

- Modular architecture that encourages reusable pipeline components.

Dagster

| Pros | Cons |
|---|---|
| Type-safe, testable, and version-controlled pipelines. | Steeper learning curve for non-developers. |
| Developer-friendly tooling and IDE integration. | Limited UI customization for non-technical users. |
| Observability dashboards provide insights into pipelines. | Relatively new; smaller ecosystem than Airflow. |
| Supports both batch and streaming pipelines. | Performance tuning may require expertise. |
| Modular design promotes reusable components. | Integration with some third-party services can need custom work. |

3. Google Cloud Dataflow

Google Cloud Dataflow is a fully managed service that gives businesses serverless, scalable data pipelines for both stream and batch processing. It runs pipelines written with Apache Beam, which provides event-time processing, windowing, and customizable transformations.

Autoscaling and deep integration with Google Cloud services such as BigQuery and Pub/Sub speed up large-scale data processing. As one of the best AI data pipeline platforms for enterprises, Dataflow lowers operational overhead while supporting machine learning workflows and real-time insights.

Its dependability, low processing latency, and unified programming model make it a top option for businesses managing complex data at scale.
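Event-time windowing, mentioned above, is easier to picture with a small example. The sketch below is plain Python rather than the Apache Beam SDK, and `fixed_windows` is a hypothetical helper. It groups events into fixed 60-second windows based on when each event happened, not when it arrived.

```python
from collections import defaultdict


def fixed_windows(events, window_seconds):
    """Sum values per fixed window, keyed by the window's start time.

    Each event is an (event_time_seconds, value) pair.
    """
    totals = defaultdict(int)
    for event_time, value in events:
        window_start = (event_time // window_seconds) * window_seconds
        totals[window_start] += value
    return dict(sorted(totals.items()))


# Events arrive out of order: the one at t=10 shows up after the one at t=62.
events = [(5, 1), (62, 1), (10, 1), (125, 1), (59, 1)]
counts = fixed_windows(events, 60)
```

Here `counts` ends up as `{0: 3, 60: 1, 120: 1}`: the late-arriving event at t=10 still lands in the first window. A production streaming engine additionally has to decide how long to wait for late data, which this sketch leaves out.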

Google Cloud Dataflow Features

- Fully managed platform for batch and stream processing.

- Flexible transformations through the Apache Beam SDK.

- Serverless design with autoscaling.

- Deep integration with BigQuery, Pub/Sub, and Google Cloud AI services.

- Low-latency real-time analytics for large datasets.

Google Cloud Dataflow

| Pros | Cons |
|---|---|
| Fully managed serverless architecture reduces operational overhead. | Cost can scale quickly for large data volumes. |
| Handles both stream and batch processing efficiently. | Requires learning Apache Beam SDK. |
| Integrates seamlessly with Google Cloud AI and analytics services. | Limited to Google Cloud ecosystem. |
| Auto-scaling ensures efficient resource usage. | Debugging distributed pipelines can be complex. |
| Low-latency real-time analytics capabilities. | Less flexibility for on-prem or multi-cloud environments. |

4. Azure Data Factory + Synapse

Azure Data Factory and Synapse Analytics together offer an integrated solution for ETL, ELT, and data analytics pipelines. By linking on-premises and cloud data sources with scalable transformations, the pairing lets businesses build end-to-end data workflows.

Synapse strengthens the analytics side by providing serverless and dedicated SQL pools for real-time insights.

This combination stands out among the best AI data pipeline platforms for enterprises thanks to its native integration with Azure AI services, strong security, and governance capabilities. Businesses can orchestrate large-scale pipelines and apply predictive analytics and AI-driven insights to support smarter decision-making and operational efficiency.

Azure Data Factory + Synapse Features

- End-to-end orchestration of ETL and ELT pipelines.

- Visual workflow designer that simplifies pipeline creation.

- Cloud-native scalability for enterprise workloads.

- Integration with Azure AI and analytics services.

- Governance, security, and real-time monitoring features.

Azure Data Factory + Synapse

| Pros | Cons |
|---|---|
| End-to-end ETL/ELT orchestration with visual designer. | Initial setup complexity can be high. |
| Cloud-native scalability for enterprise workloads. | Can be expensive for large-scale pipelines. |
| Integrates with Azure AI and analytics services. | Limited support for some open-source tools. |
| Strong governance, security, and compliance. | UI can be overwhelming for small teams. |
| Real-time monitoring and logging. | Batch processing may lag in extreme high-volume scenarios. |

5. Databricks Lakehouse Platform

The Databricks Lakehouse Platform combines data engineering, analytics, and AI workloads in a unified architecture. It pairs the flexibility of data lakes with the reliability of data warehouses, letting businesses build high-performance pipelines.

Integrated machine learning support, collaborative notebooks, and Delta Lake storage help teams prepare, process, and analyze data efficiently. The platform supports real-time analytics, predictive modeling, and strong data governance, and it is frequently listed among the best AI data pipeline platforms for enterprises.

Its scalable infrastructure and smooth integration with cloud services make it a good fit for businesses that want to use big data and AI together.

Databricks Lakehouse Platform Features

- Storage, analytics, and AI in a single lakehouse architecture.

- Collaborative notebooks for ML and data engineering workflows.

- Built-in Delta Lake for dependable data pipelines.

- Scalable AI and machine learning support.

- Streaming support and real-time analytics.

Databricks Lakehouse Platform

| Pros | Cons |
|---|---|
| Unified architecture for data storage, analytics, and AI. | Can be expensive for smaller teams. |
| Supports collaborative notebooks for ML and data workflows. | Requires technical expertise for advanced features. |
| Built-in Delta Lake ensures reliable pipelines. | Initial learning curve for Lakehouse concepts. |
| Scalable AI and ML workflows. | Onboarding new developers may take time. |
| Real-time analytics and streaming support. | Dependency on cloud provider infrastructure. |

6. Snowflake Snowpark + Streamlit

Combined with Streamlit, Snowflake Snowpark lets developers build, deploy, and visualize pipelines directly within the Snowflake environment. Snowpark offers programmable data pipelines in familiar languages such as Python, while Streamlit provides interactive dashboards and reporting.

Businesses can turn raw data into actionable insights without moving data off the platform. The combination offers low-latency analytics, secure data access, and real-time collaboration, making it one of the best AI data pipeline platforms for enterprises.

It is especially useful for teams seeking an integrated, end-to-end solution for business analytics, machine learning, and AI workflows within a single cloud-native environment.

Snowflake Snowpark + Streamlit Features

- Programmable pipelines inside Snowflake with Snowpark.

- Custom logic in Python, Java, and Scala.

- Interactive apps and dashboards with Streamlit.

- Real-time analytics without moving data off the platform.

- Secure, governed, cloud-native environment.

Snowflake Snowpark + Streamlit

| Pros | Cons |
|---|---|
| Programmable pipelines inside Snowflake. | Limited to Snowflake ecosystem. |
| Supports Python, Java, and Scala with Snowpark. | Streamlit apps may require separate deployment management. |
| Interactive dashboards via Streamlit. | Real-time transformation may have latency for complex tasks. |
| Real-time analytics without moving data. | Requires understanding Snowflake-specific syntax. |
| Secure and governed cloud-native environment. | Costs can rise with large-scale pipelines and compute usage. |

7. MuleSoft Anypoint AI Pipelines

MuleSoft Anypoint AI Pipelines is an enterprise integration platform that connects data sources, applications, and AI services. It simplifies pipeline building with prebuilt templates, reusable connectors, and automated workflow orchestration. Enterprises benefit from improved data-flow visibility, security, and API-led connectivity.

As one of the best AI data pipeline platforms for enterprises, MuleSoft supports dependable, consistent integration across complex systems while handling both batch and real-time data processing. Its ability to bring AI-driven insights into existing enterprise applications makes it a strong choice for businesses that want scalable, automated, and intelligent data pipelines.

MuleSoft Anypoint AI Pipelines Features

- API-led integration of apps, data, and AI services.

- Prebuilt connectors and templates for faster implementation.

- Automated workflow orchestration.

- Batch and real-time data processing.

- Enterprise-grade security and monitoring features.

MuleSoft Anypoint AI Pipelines

| Pros | Cons |
|---|---|
| Enterprise-grade API-led integration of apps and data. | Pricing is high for small to medium businesses. |
| Prebuilt connectors and templates speed deployment. | Complex for simple workflows. |
| Automated orchestration for batch and real-time pipelines. | Learning curve for new users is significant. |
| Enterprise-grade security and monitoring. | Heavy reliance on MuleSoft ecosystem. |
| Supports AI data workflows with integration capabilities. | Customization beyond templates can be complex. |

8. Confluent Kafka Platform

Confluent Kafka Platform is one of the leading event streaming platforms for real-time data pipelines and applications. It offers high-throughput, low-latency messaging along with stream processing, connectors, and monitoring tools. Businesses use Kafka to reliably handle massive volumes of events across distributed systems.

It is regarded as one of the best AI data pipeline platforms for enterprises for powering real-time analytics, machine learning pipelines, and predictive applications. Its durability, fault tolerance, and scalability make Kafka well suited to enterprises that need continuous data ingestion, event-driven architectures, and AI-powered insights delivered at scale.
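The core idea behind event streaming, an append-only log that several consumers read at their own pace, can be sketched in a few lines. The class below is an in-memory toy, not a Kafka client: `TopicLog`, its methods, and the group names are hypothetical and exist only to illustrate offsets and independent consumer groups.

```python
class TopicLog:
    """In-memory stand-in for one topic partition (illustration only)."""

    def __init__(self):
        self._records = []   # append-only list of events
        self._offsets = {}   # consumer group -> next offset to read

    def produce(self, record):
        """Append a record and return its offset in the log."""
        self._records.append(record)
        return len(self._records) - 1

    def poll(self, group, max_records=10):
        """Return the next unread records for a group and advance its offset."""
        start = self._offsets.get(group, 0)
        batch = self._records[start:start + max_records]
        self._offsets[group] = start + len(batch)
        return batch


log = TopicLog()
for event in ("signup", "login", "purchase"):
    log.produce(event)

analytics_first = log.poll("analytics", max_records=2)
analytics_second = log.poll("analytics")
ml_all = log.poll("ml-features")
```

The `analytics` group reads two events and then the remaining one, while the `ml-features` group independently reads all three from the start. Records are never removed by reading, which is what lets multiple downstream pipelines share one stream.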

Confluent Kafka Platform Features

- High-throughput, low-latency event streaming.

- Durable, fault-tolerant design.

- Stream processing with Kafka Streams and ksqlDB.

- Scalable multi-cloud deployment options.

- Pipelines ready for AI and real-time analytics.

Confluent Kafka Platform

| Pros | Cons |
|---|---|
| High-throughput, low-latency event streaming. | Requires significant operational expertise. |
| Durable, fault-tolerant architecture. | Complex setup for beginners. |
| Stream processing with Kafka Streams and ksqlDB. | Scaling requires careful planning. |
| Multi-cloud and on-prem deployment options. | Monitoring and debugging distributed systems can be challenging. |
| Ideal for real-time AI and analytics pipelines. | Not a full ETL/ELT platform; additional tools often required. |

9. Fivetran + dbt

Fivetran automates data extraction and loading, while dbt focuses on data transformation; together they provide fully managed ELT pipelines. Businesses can quickly deliver analytics-ready data that is accurate and consistent.

Fivetran manages connectors and schema changes automatically, while dbt enables modular, version-controlled transformations. The combination speeds up decision-making and lowers engineering overhead, making it one of the best AI data pipeline platforms for enterprises.

Teams can focus on analytics instead of infrastructure, delivering timely, clean, and organized data from many sources to support AI and machine learning workflows without complicated setup or manual maintenance.
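The ELT pattern described above (load raw data first, then transform it inside the warehouse with SQL) can be illustrated with Python's built-in `sqlite3` module standing in for a cloud warehouse. This is a sketch of the pattern only; it uses neither Fivetran nor dbt, and the table, view, and column names are made up for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# "Load" step: land source rows untouched in a raw table.
conn.execute(
    "CREATE TABLE raw_orders (id INTEGER, customer TEXT, amount_cents INTEGER, status TEXT)"
)
conn.executemany(
    "INSERT INTO raw_orders VALUES (?, ?, ?, ?)",
    [
        (1, "acme", 1250, "paid"),
        (2, "acme", 800, "refunded"),
        (3, "globex", 4000, "paid"),
    ],
)

# "Transform" step: a SQL model defined on top of the raw table,
# executed inside the database rather than in an external tool.
conn.execute(
    """
    CREATE VIEW customer_revenue AS
    SELECT customer, SUM(amount_cents) / 100.0 AS revenue
    FROM raw_orders
    WHERE status = 'paid'
    GROUP BY customer
    """
)

revenue = conn.execute(
    "SELECT customer, revenue FROM customer_revenue ORDER BY customer"
).fetchall()
```

Keeping the raw table intact means the transformation can be rewritten and re-run at any time without re-extracting data from the source, which is a large part of why ELT pairs well with version-controlled SQL models.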

Fivetran + dbt Features

- Automated ETL/ELT pipelines for fast data delivery.

- Low-maintenance connectors with automatic handling of schema changes.

- Modular, version-controlled transformations with dbt.

- Analytics-ready data for business intelligence.

- Smooth integration with cloud warehouses and AI workflows.

Fivetran + dbt

| Pros | Cons |
|---|---|
| Fully automated ETL/ELT pipelines reduce engineering effort. | Can be costly for many connectors or large volumes. |
| Handles schema changes automatically. | Transformation logic limited to dbt-supported languages. |
| Modular and version-controlled transformations with dbt. | Less control over low-level pipeline optimizations. |
| Provides analytics-ready data quickly. | Reliant on cloud warehouse performance. |
| Seamless integration with BI and AI tools. | Some complex pipelines may require custom coding. |

10. StreamSets DataOps Platform

The StreamSets DataOps Platform is designed for continuous data integration, pipeline monitoring, and real-time error handling. It supports both batch and streaming data and provides visibility into pipeline performance and data quality.

Businesses can scale pipelines efficiently, support compliance, and automate transformations. Regarded as one of the best AI data pipeline platforms for enterprises, StreamSets makes it easier to build dependable, maintainable, and auditable pipelines.

Its operational dashboards and flexibility suit complex, multi-cloud data ecosystems, helping businesses deliver fast insights, support AI-driven decisions, and maintain consistent data quality across applications.
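One common way to combine error handling with data quality checks is to route invalid records to a separate error stream instead of failing the whole pipeline. The sketch below shows that pattern in plain Python; it does not use any StreamSets API, and `route_records` and the sample fields are hypothetical.

```python
def route_records(records, required_fields):
    """Split records into valid ones and error entries with a reason attached."""
    good, errors = [], []
    for record in records:
        # A field counts as missing if it is absent, None, or an empty string.
        missing = [f for f in required_fields if record.get(f) in (None, "")]
        if missing:
            errors.append({"record": record, "reason": f"missing: {', '.join(missing)}"})
        else:
            good.append(record)
    return good, errors


records = [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": ""},
    {"email": "c@example.com"},
]
good, errors = route_records(records, ["id", "email"])
```

Valid rows continue downstream while each rejected row keeps a reason string, which gives operators an auditable trail of what was dropped and why.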

StreamSets DataOps Platform Features

- Continuous data integration for batch and streaming pipelines.

- Tools for error handling, governance, and data quality.

- Automated retries and scalable multi-cloud deployment.

- Observability dashboards for tracking pipeline health.

- Dependable, maintainable, and auditable pipelines for enterprises.

StreamSets DataOps Platform

| Pros | Cons |
|---|---|
| Continuous data integration for batch and streaming pipelines. | Learning curve for complex deployments. |
| Comprehensive data quality and governance tools. | UI may feel complex for small teams. |
| Automated error handling and retries. | Scaling pipelines may need advanced configuration. |
| Observability dashboards for pipeline health. | Can be expensive for small-scale projects. |
| Reliable and auditable pipelines for enterprise workflows. | Less widely adopted than some older platforms, so smaller community support. |

Conclusion

Selecting the right AI data pipeline platform is essential for businesses that want to optimize operations, enable real-time analytics, and put AI insights to effective use in today’s data-driven environment.

Each of these platforms (Prefect Orion, Dagster, Google Cloud Dataflow, Azure Data Factory + Synapse, Databricks Lakehouse, Snowflake Snowpark + Streamlit, MuleSoft Anypoint, Confluent Kafka, Fivetran + dbt, and StreamSets DataOps) offers distinct features suited to different business requirements.

By adopting these platforms, organizations can build scalable, dependable, and intelligent data workflows that spur innovation, improve decision-making, and preserve a competitive edge in a rapidly changing digital landscape.

FAQ

What is an AI data pipeline platform?

An AI data pipeline platform is a software solution that automates the flow of data from collection and processing to analysis and machine learning. It ensures data is clean, structured, and available in real time for enterprise analytics and AI workflows.

Why do enterprises need AI data pipeline platforms?

Enterprises handle massive amounts of data from multiple sources. AI data pipeline platforms streamline integration, transformation, and monitoring of this data, enabling real-time insights, predictive analytics, and faster, smarter business decisions.

Which platforms are considered the best for enterprises?

Some of the best AI data pipeline platforms for modern enterprises include Prefect Orion, Dagster, Google Cloud Dataflow, Azure Data Factory + Synapse, Databricks Lakehouse Platform, Snowflake Snowpark + Streamlit, MuleSoft Anypoint AI Pipelines, Confluent Kafka Platform, Fivetran + dbt, and StreamSets DataOps Platform.

How do these platforms differ?

Platforms differ in architecture, deployment models, scalability, AI integration, real-time processing, and ease of use. Some focus on orchestration (Prefect Orion, Dagster), others on cloud-native processing (Dataflow, Synapse), while some specialize in streaming (Kafka) or automated ELT/ETL (Fivetran + dbt).